Quickstart

Build and run your first Pipecat voice application

Available SDKs

JavaScript

Core SDK for web applications

React

Hooks and components for React apps

React Native

iOS and Android via React Native

iOS

Native Swift SDK for iOS

Android

Native Kotlin SDK for Android

C++

Native SDK for desktop and embedded applications

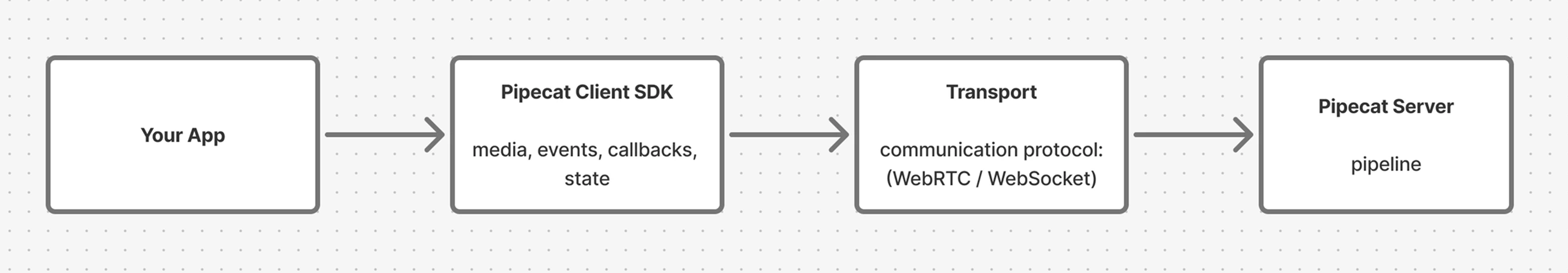

How It Fits Together

Pipecat’s architecture is split between server and client. The server runs your AI pipeline (speech recognition, an LLM, and text-to-speech), and the client connects your user to that pipeline over a real-time transport.
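To make the server-side data flow concrete, here is a toy model of one conversational turn: user audio enters, is transcribed, answered by an LLM, and synthesized back into audio. This is a minimal sketch under loose assumptions. The `Frame` type, the stage functions, and `runPipeline` are all hypothetical stand-ins, not Pipecat's server API, and the "transcription" and "synthesis" are fake. In a real deployment these stages run on the server, and the client SDK only streams microphone audio in and plays the resulting bot audio out.

```typescript
// Hypothetical frame type: audio or text moving between pipeline stages.
type Frame =
  | { kind: "audio"; samples: number[] }
  | { kind: "text"; text: string };

// A stage transforms one frame into another (hypothetical, not Pipecat's API).
type Stage = (frame: Frame) => Frame;

// Fake speech recognition: turns audio into a placeholder transcript.
const speechToText: Stage = (frame) =>
  frame.kind === "audio"
    ? { kind: "text", text: `heard ${frame.samples.length} samples` }
    : frame;

// Fake LLM: produces a reply to the transcribed text.
const llm: Stage = (frame) =>
  frame.kind === "text" ? { kind: "text", text: `reply to: ${frame.text}` } : frame;

// Fake text-to-speech: emits one "sample" per character of the reply.
const textToSpeech: Stage = (frame) =>
  frame.kind === "text"
    ? { kind: "audio", samples: Array.from(frame.text, (c) => c.charCodeAt(0)) }
    : frame;

// The pipeline applies each stage in order to the incoming frame.
function runPipeline(stages: Stage[], input: Frame): Frame {
  return stages.reduce((frame, stage) => stage(frame), input);
}

// One turn: three samples of user audio in, bot audio out.
const output = runPipeline([speechToText, llm, textToSpeech], {
  kind: "audio",
  samples: [0, 0, 0],
});
```

Modeling the pipeline as an ordered list of stages keeps each step swappable, which mirrors the idea that speech recognition, the LLM, and text-to-speech are separate, replaceable services behind a single transport.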

What the SDKs Handle

- Transport management — establishing, maintaining, and tearing down connections

- Media capture — microphone and camera device access and streaming

- Audio playback — rendering bot audio output

- Session state — tracking connection state and bot readiness

- Event stream — callbacks and events for transcriptions, bot speaking, errors, and more

- Messaging — sending custom messages to your bot and handling responses
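Several of the responsibilities listed above (session state, the event stream, and messaging) fit together in a small state-and-events pattern. The sketch below is illustrative only: `ToyVoiceClient`, its `SessionState` values, its event names, and its `outbox` are hypothetical and do not reflect the actual Pipecat SDK classes or callbacks. It also assumes, purely for the sketch, that custom messages are rejected until the bot reports it is ready; the real SDKs may behave differently.

```typescript
// Hypothetical session states; the real SDKs define their own set.
type SessionState = "disconnected" | "connecting" | "connected" | "ready";

// Hypothetical events an SDK might surface to the application.
type ClientEvent =
  | { type: "stateChanged"; state: SessionState }
  | { type: "userTranscript"; text: string; final: boolean }
  | { type: "botStartedSpeaking" }
  | { type: "error"; message: string };

type Listener = (event: ClientEvent) => void;

// Minimal stand-in for what a client SDK tracks (not Pipecat's API).
class ToyVoiceClient {
  private state: SessionState = "disconnected";
  private listeners: Listener[] = [];
  // Messages "sent" to the bot are queued here instead of going over a real transport.
  readonly outbox: { type: string; data: unknown }[] = [];

  get sessionState(): SessionState {
    return this.state;
  }

  // Subscribe to events; returns a function that unsubscribes.
  on(listener: Listener): () => void {
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }

  // Called by the (imaginary) transport layer; updates state, then notifies listeners.
  emit(event: ClientEvent): void {
    if (event.type === "stateChanged") this.state = event.state;
    for (const l of this.listeners) l(event);
  }

  // Assumption for this sketch: custom messages are only accepted once the bot is ready.
  sendMessage(type: string, data: unknown): void {
    if (this.state !== "ready") {
      this.emit({ type: "error", message: `cannot send "${type}" while ${this.state}` });
      return;
    }
    this.outbox.push({ type, data });
  }
}

// Usage: record every event, try messaging too early, then walk through a connection.
const client = new ToyVoiceClient();
const log: string[] = [];
client.on((e) => log.push(e.type === "stateChanged" ? `state:${e.state}` : e.type));

client.sendMessage("set-voice", { voice: "calm" }); // rejected: bot not ready yet
client.emit({ type: "stateChanged", state: "connecting" });
client.emit({ type: "stateChanged", state: "connected" });
client.emit({ type: "stateChanged", state: "ready" });
client.sendMessage("set-voice", { voice: "calm" }); // accepted and queued
```

Routing every state change through the same event stream means the application can drive its UI (for example, enabling a "talk" button only once the session is ready) from a single subscription instead of polling connection status.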

Ready to Build?

Quickstart

Get a voice conversation running in minutes

Core Concepts

Understand transports, sessions, events, and media

API Reference

Full reference for all SDK methods, hooks, and callbacks

Examples

Complete example applications across all platforms